
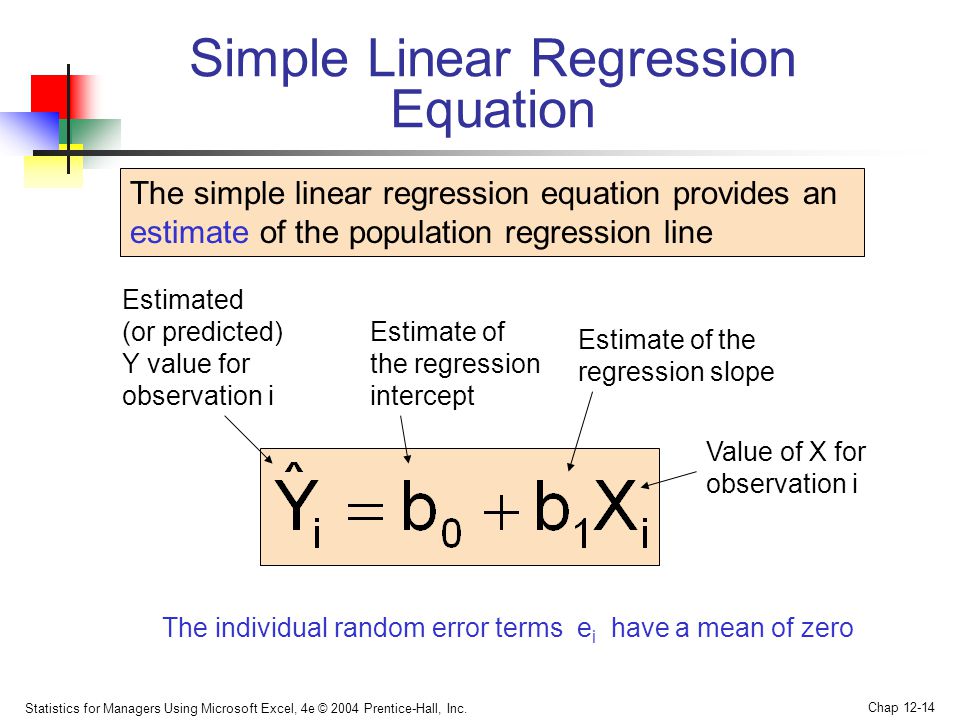

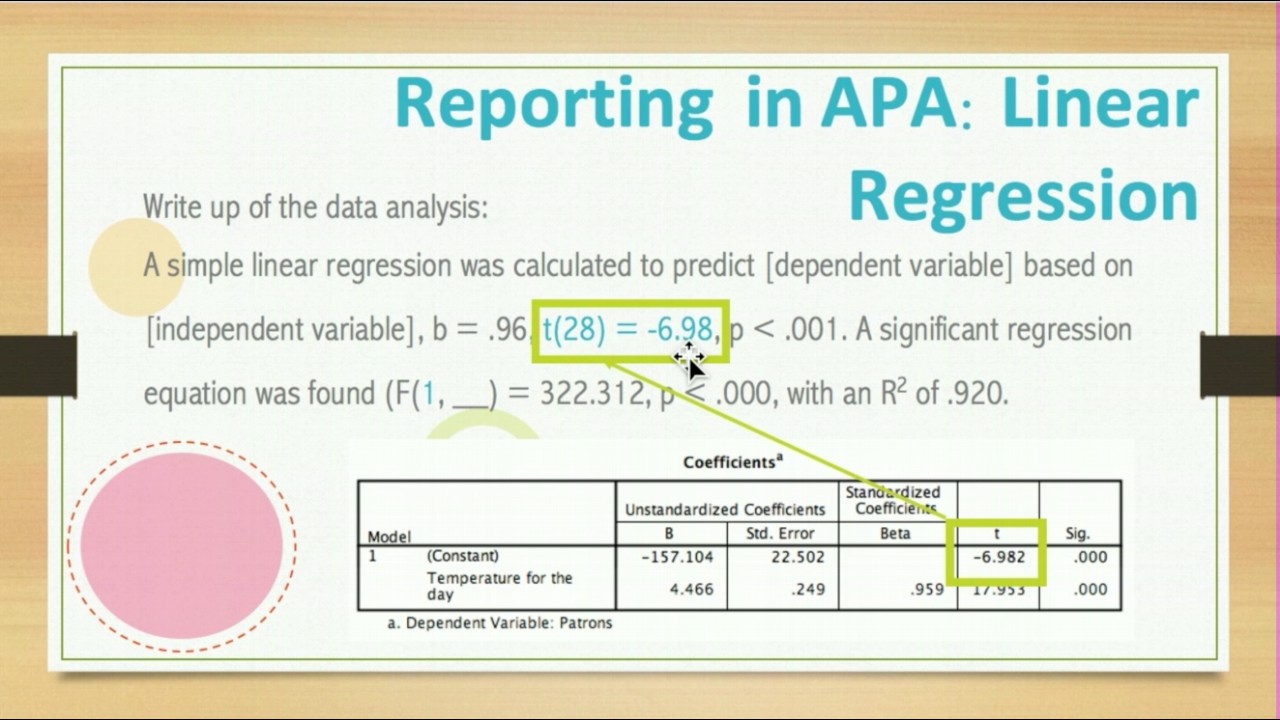

The slope of the relationship between the part of a predictor variable independent of other predictor variables and the criterion is its partial slope. Similarly, if they differed by 0.5, then you would predict they would differ by (0.50)(0.54) = 0.27. If two students had the same SAT and differed in HSGPA by 2, then you would predict they would differ in UGPA by (2)(0.54) = 1.08. Since the regression coefficient for HSGPA is 0.54, this means that, holding SAT constant, a change of one in HSGPA is associated with a change of 0.54 in UGPA'. It represents the change in the criterion variable associated with a change of one in the predictor variable when all other predictor variables are held constant. This means that the regression coefficient for HSGPA is the slope of the relationship between the criterion variable and the part of HSGPA that is independent of (uncorrelated with) the other predictor variables. Notice that the slope (0.541) is the same value given previously for b 1 in the multiple regression equation. The linear regression equation for the prediction of UGPA by the residuals is The following equation is used to predict HSGPA from SAT: This slope is the regression coefficient for HSGPA. The final step in computing the regression coefficient is to find the slope of the relationship between these residuals and UGPA. The correlation between HSGPA.SAT and SAT is necessarily 0. These residuals are referred to as HSGPA.SAT, which means they are the residuals in HSGPA after having been predicted by SAT.

These errors of prediction are called "residuals" since they are what is left over in HSGPA after the predictions from SAT are subtracted, and represent the part of HSGPA that is independent of SAT. In this example, the regression coefficient for HSGPA can be computed by first predicting HSGPA from SAT and saving the errors of prediction (the differences between HSGPA and HSGPA'). Interpretation of Regression CoefficientsĪ regression coefficient in multiple regression is the slope of the linear relationship between the criterion variable and the part of a predictor variable that is independent of all other predictor variables. Note that R will never be negative since if there are negative correlations between the predictor variables and the criterion, the regression weights will be negative so that the correlation between the predicted and actual scores will be positive.

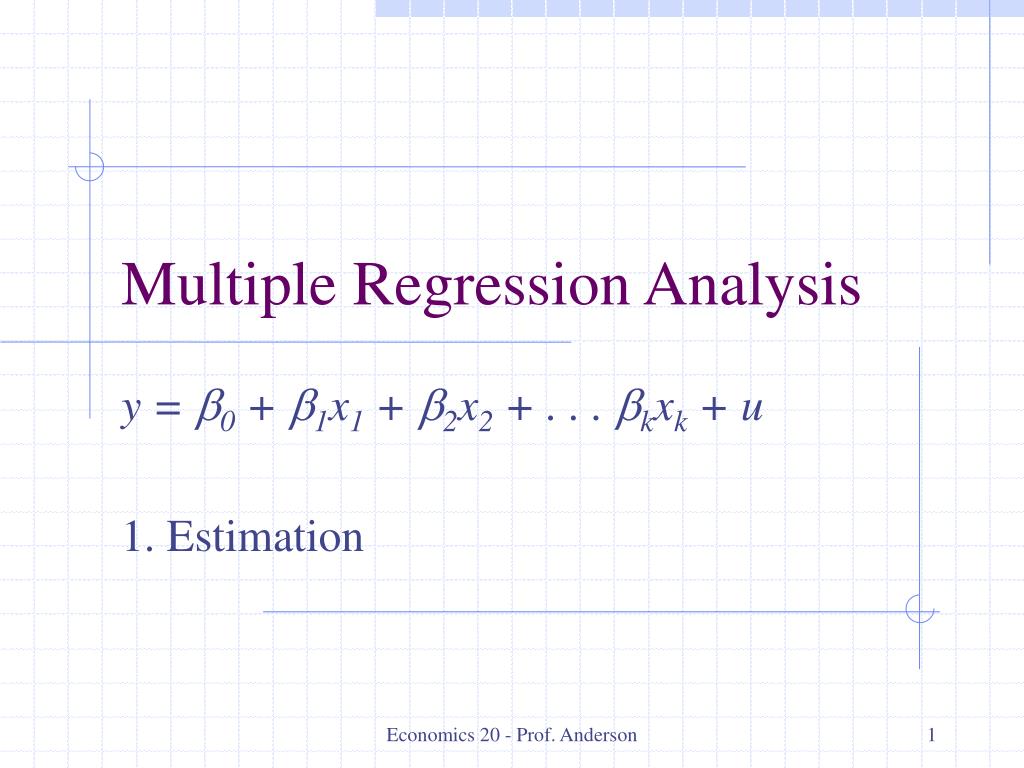

In this example, it is the correlation between UGPA' and UGPA, which turns out to be 0.79. The multiple correlation (R) is equal to the correlation between the predicted scores and the actual scores. The values of b (b 1 and b 2) are sometimes called " regression coefficients" and sometimes called " regression weights." These two terms are synonymous. Table 1 shows the data and predictions for the first five students in the dataset. In other words, to compute the prediction of a student's University GPA, you add up (a) their High-School GPA multiplied by 0.541, (b) their SAT multiplied by 0.008, and (c) 0.540. For these data, the best prediction equation is shown below: Where UGPA' is the predicted value of University GPA and A is a constant. As in the case of simple linear regression, we define the best predictions as the predictions that minimize the squared errors of prediction. That is, the problem is to find the values of b 1 and b 2 in the equation shown below that give the best predictions of UGPA. The basic idea is to find a linear combination of HSGPA and SAT that best predicts University GPA (UGPA). For example, in the SAT case study, you might want to predict a student's university grade point average on the basis of their High-School GPA (HSGPA) and their total SAT score (verbal + math). In multiple regression, the criterion is predicted by two or more variables. In simple linear regression, a criterion variable is predicted from one predictor variable.
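The residual procedure for finding a regression coefficient can be checked numerically. Below is a minimal pure-Python sketch using eight made-up students (hypothetical data, not the SAT case study values); the helper names `simple_fit`, `two_predictor_fit`, and `correlation` are illustrative, not from any library. It fits the two-predictor equation by least squares, then predicts HSGPA from SAT, keeps the residuals HSGPA.SAT, and regresses UGPA on them. The resulting slope should match $b_1$, a result known more generally as the Frisch-Waugh-Lovell theorem. It also computes R as the correlation between predicted and actual scores, and checks that two students with the same SAT and an HSGPA gap of 2 are predicted to differ by $2 b_1$.

```python
import math


def _mean(v):
    return sum(v) / len(v)


def simple_fit(x, y):
    """Least-squares (intercept, slope) for predicting y from one predictor x."""
    mx, my = _mean(x), _mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return my - slope * mx, slope


def two_predictor_fit(x1, x2, y):
    """Least-squares (A, b1, b2) for y' = b1*x1 + b2*x2 + A, solved from centered sums."""
    m1, m2, my = _mean(x1), _mean(x2), _mean(y)
    c1 = [a - m1 for a in x1]
    c2 = [a - m2 for a in x2]
    cy = [a - my for a in y]
    s11 = sum(a * a for a in c1)
    s22 = sum(a * a for a in c2)
    s12 = sum(a * b for a, b in zip(c1, c2))
    s1y = sum(a * b for a, b in zip(c1, cy))
    s2y = sum(a * b for a, b in zip(c2, cy))
    det = s11 * s22 - s12 ** 2  # nonzero unless x1 and x2 are perfectly collinear
    b1 = (s22 * s1y - s12 * s2y) / det
    b2 = (s11 * s2y - s12 * s1y) / det
    return my - b1 * m1 - b2 * m2, b1, b2


def correlation(x, y):
    """Pearson correlation coefficient."""
    mx, my = _mean(x), _mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data for eight made-up students (NOT the SAT case study data).
hsgpa = [2.5, 3.0, 3.2, 3.6, 2.8, 3.9, 2.2, 3.4]
sat = [1010, 1150, 1090, 1280, 1200, 1350, 980, 1120]
ugpa = [2.6, 3.1, 2.9, 3.5, 3.0, 3.8, 2.4, 3.3]

# Multiple regression: UGPA' = b1*HSGPA + b2*SAT + A
A, b1, b2 = two_predictor_fit(hsgpa, sat, ugpa)
predicted = [b1 * h + b2 * s + A for h, s in zip(hsgpa, sat)]
R = correlation(predicted, ugpa)  # multiple correlation

# Step 1: predict HSGPA from SAT and keep the residuals (HSGPA.SAT).
a0, c = simple_fit(sat, hsgpa)
hsgpa_sat = [h - (a0 + c * s) for h, s in zip(hsgpa, sat)]

# Step 2: the slope of UGPA on HSGPA.SAT is the regression coefficient for HSGPA.
_, partial_slope = simple_fit(hsgpa_sat, ugpa)

# Two hypothetical students with the same SAT whose HSGPA differs by 2.
diff = (b1 * 3.5 + b2 * 1100 + A) - (b1 * 1.5 + b2 * 1100 + A)

print(f"b1 = {b1:.4f}, slope on HSGPA.SAT = {partial_slope:.4f}")
print(f"r(HSGPA.SAT, SAT) = {correlation(hsgpa_sat, sat):.2e}, R = {R:.3f}")
print(f"predicted UGPA difference for an HSGPA gap of 2 = {diff:.4f} (should equal 2 * b1)")
```

The closed-form two-predictor solution is used only to keep the sketch dependency-free; with more predictors, a general least-squares solver such as NumPy's `numpy.linalg.lstsq` would be the usual choice.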